MediaMorph Edition 95 - by HANA News

McGill study - "AI models do not attribute news sources 82 per cent of the time"

The written-by-a-human bit

A new study from McGill University's Centre for Media, Technology and Democracy tested 2,267 Canadian news stories against four major AI models, ChatGPT, Gemini, Claude and Grok, and found that all four drew extensively on Canadian journalism to answer questions about current events. The models did not provide source attribution about 82 per cent of the time.

As the McGill team put it: "Links provide a pathway back to the source, but consumers reading the response itself rarely see an indication of whose journalism they are consuming."

(Note to self: here at HANA News, we attribute 100% of the time.)

The conversation about AI and news has spent years circling the training-data question: was our archive scraped, and was that legal? That argument is still live in courtrooms from Ontario to Manhattan. But the McGill study shifts the focus to something more awkward. It is not just that journalism was ingested. It is that the resulting product can satisfy the reader's need without the reader ever encountering the publisher.

Search engines sent traffic. Answer engines clearly do not.

The consumer's need to visit the source is, in the brief's words, "rendered unnecessary by the AI's response itself".

A link buried beneath a verbose, self-contained answer is not the same as a source relationship. If the user gets the facts, the framing and even the narrative arc without leaving the chatbot, then the publisher has lost more than a pageview. It has lost branding, visibility and credibility.

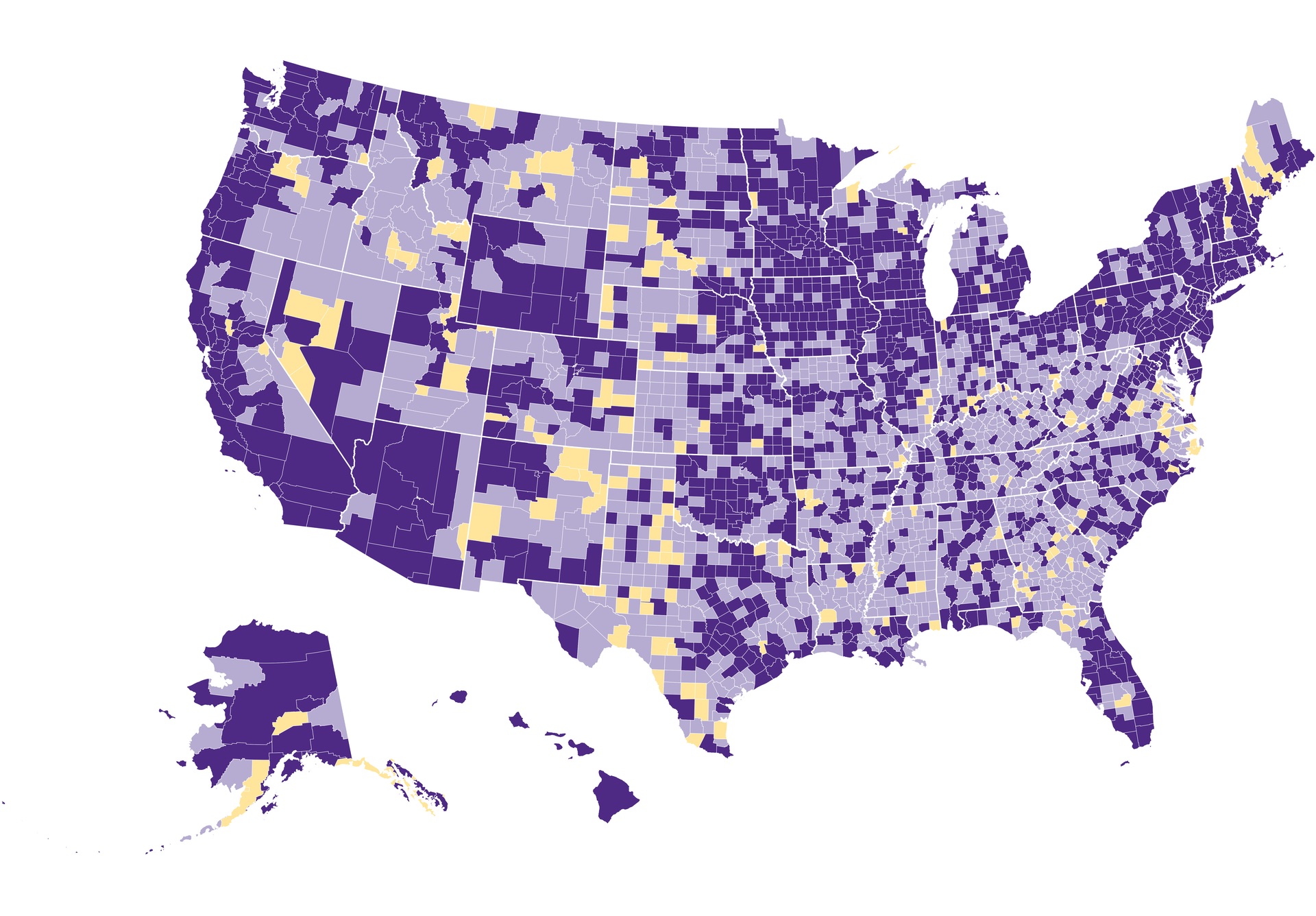

Meanwhile, in the US, 3,500 newspapers have gone and 60% of newsroom jobs have been eliminated. Search traffic to publishers is down 33% in a single year, and 74% of new web pages are now AI-generated.

The State of Local News

localnewsinitiative.northwestern.edu/projects/state-of-local-news/2025/report

Publishers need to fight back.

The temptation is to frame this as a morality play. But the more useful reading of the McGill data is strategic. If AI systems rely on journalism for factual depth, current events coverage and answer generation, then publishers hold something these platforms need.

The AI platforms are smart enough to know that without newsrooms and human-generated content, they will end up feeding off more and more of their own slop. The model then degrades and ends up eating its own tail. The ouroboros springs to mind.

Attribution alone is not the fix. A named source within a completed answer may still leave the publisher out of pocket if no one clicks through. Licensing alone is not the fix either, if the system is designed to minimise visible sourcing. Publishers may need to negotiate for both compensation and recognition standards that keep the reporting visible inside the product.

Of course, many publishers will eventually license, syndicate, or optimise for these systems. Some already are. The real argument is not whether AI uses journalism. It is whether journalism remains identifiable when it does.

The McGill numbers make that argument harder to wave away. Across 2,267 stories, 82 per cent non-attribution is a staggering figure.

If AI can rely on reporting while hiding the reporter, journalism risks becoming critical infrastructure with no brand attached. Publishers have been in uncomfortable positions before. This one requires them to fight not just for payment, but for the simple fact of being named, recognised and fairly credited.

It was a pleasure to talk to Rob Kelly from Media and the Machine about my time at Dow Jones, how to survive and thrive in the AI era, and some advice for the next generation.

Mark Riley, CEO Mathison AI

AI and Journalism

This week’s best articles, as chosen by our editors

AI systems use Canadian journalism but seldom cite media sources: report
A recent study reveals that AI systems heavily depend on Canadian journalism for information but fail to provide adequate recognition or compensation, raising concerns about the sustainability of quality journalism. The findings call for a reevaluation of the relationship between AI and journalism, emphasizing the need for fair compensation models to support original creators.

Culture minister says ‘serious conversation’ needed about AI systems and news media
CFJC Today Kamloops - March 17, 2026
On March 17, 2026, Canadian Culture Minister Marc Miller called for a serious dialogue on AI's use of news content, highlighting concerns about the lack of attribution and compensation for journalists, as revealed by a McGill University report. This follows a lawsuit by major Canadian news outlets against OpenAI for allegedly using their copyrighted material to train ChatGPT without permission, raising significant copyright issues in the evolving landscape of AI and journalism.

AI news audit: AI, Canadian journalism and paths for policy action
Editor and Publisher - March 17, 2026
A recent audit revealed that major AI models misused Canadian journalism, failing to provide source attribution 82% of the time and only explicitly attributing sources in 1% to 16% of cases. This highlights the urgent need for policy changes to govern the use of journalism by AI, emphasizing the importance of proper attribution and compensation for original content.

AI and the Future of News 2026: what we learnt about its impact on newsrooms, fact-checking and news coverage
Reuters Institute for the Study of Journalism - March 18, 2026
The "AI and the Future of News" conference brought together over 3,000 participants, including journalists and experts from the University of Oxford, to explore the challenges and opportunities AI presents in journalism, highlighting the need for clear communication, collaboration, and ethical considerations in reporting. Discussions ranged from enhancing investigative capabilities with AI tools to addressing the rise of misinformation, emphasizing the importance of regulation and accountability in the rapidly evolving media landscape.

Senior European journalist suspended over AI-generated quotes
The Guardian - March 20, 2026
Peter Vandermeersch, a senior journalist and former head of Irish operations at Mediahuis, has been suspended for inaccurately using AI tools like ChatGPT to summarise reports, leading to false quotes in his Substack newsletter. While he acknowledges the need for human oversight in journalism, he remains optimistic about AI's potential to enhance the field despite recognizing his flawed approach to its implementation.

For AI Help, More College Students Ask Social Media First
Inside Higher Ed - March 20, 2026
A report highlights that 70% of college students use AI tools regularly for their education, with many preferring informal guidance sources like social media. Additionally, a significant interest in free local courses indicates a strong demand for accessible educational resources to foster community engagement and lifelong learning.

Scrolling for the Truth: Using AI to Verify Scientific Claims on Social Media
Stony Brook's Ritwik Banerjee and his team have developed innovative tools to verify the authenticity of scientific claims, tackling misinformation and enhancing the credibility of research. Their initiative aims to empower researchers, journalists, and the public with resources for assessing the integrity of scientific publications, fostering a culture of accountability and transparency in scientific discourse.

Why Generative AI Threatens Creative Roles In Media And Entertainment
Forbes
Generative AI is transforming the creative labor market by enhancing productivity and efficiency, potentially reducing demand for traditional roles. However, this technology may redefine creativity, emphasizing the need for human oversight, emotional intelligence, and unique personal styles that AI cannot replicate.

AI Is Becoming the Operating Layer for Media and Entertainment
TV Tech - March 19, 2026
Artificial intelligence is transforming media operations by automating processes like metadata tagging, quality control, and content localization, leading to enhanced decision-making and efficiency. As broadcasters integrate AI into their core infrastructure, they will leverage agentic systems that improve viewer engagement and streamline workflows, setting the stage for a new era in content production and distribution.

Traffic is dying as a media metric. What comes next is more important
In a crowded media landscape, audiences are increasingly drawn to authentic voices that reflect their own experiences and values, emphasizing the importance of diverse perspectives and genuine storytelling. While AI can aid in content creation, it is the human touch (empathy and personal connection) that truly captivates readers and fosters loyalty.

News/Media Alliance Statement on White House National AI Legislative Framework
The White House has introduced its National AI Legislative Framework, aiming to balance innovation with safety and ethical standards in artificial intelligence. Key focuses include transparency, bias mitigation, and collaboration across sectors to ensure responsible AI development while protecting civil rights.

AI and Academic Publishing

This week’s best articles, as chosen by our editors

AI and Publishing: FAQ for Writers
Jane Friedman - March 24, 2026
Explore the evolving legal landscape of generative AI and large language models in the U.S., where copyright, fair use, and author rights are increasingly complex. Key topics include the implications of AI-assisted work, recent court rulings, and the importance of transparency and certification to preserve human authorship in publishing.

Frontiers’ AI tool aims to unlock lost research data
Since its launch in October 2025, Frontiers FAIR² has transformed research data management by automating compliance with the FAIR principles, making datasets more accessible and reusable. This AI-driven tool streamlines organization and metadata generation, enabling researchers to share findings and collaborate seamlessly across disciplines.

AI is here—publishers must help researchers use it responsibly
Research Professional News - March 19, 2026
Elena Vicario calls for the scholarly publishing sector to adapt its policies in light of artificial intelligence advancements, highlighting the German Research Foundation's progressive stance on AI in funding reviews. As head of research integrity at Frontiers, she urges publishers to proactively integrate AI into their processes to stay relevant in an evolving landscape.

Horror Novel ‘Shy Girl’ Canceled Over Suspected A.I. Use
The New York Times
Hachette has chosen not to release a novel in the U.S. and will also halt its U.K. edition, citing a commitment to certain principles without detailing specific reasons. This decision raises important questions about publishing practices and the responsibilities of publishers in the current literary landscape.

Research integrity is locked into an arms race with agentic AI slop
LSE Impact
The convergence of advanced agentic AI and vast, accessible datasets is revolutionizing industries by enhancing decision-making and driving efficiencies. However, this evolution also necessitates the establishment of strong governance frameworks to address ethical concerns and ensure responsible use of these powerful technologies.

A clarinetist, a high school student, and four climate deniers write a science paper, with a little help from AI…
Controversial papers on climate change can fuel skepticism and misinformation, undermining public understanding of the overwhelming evidence for human-induced climate change. This underscores the need for critical evaluation of scientific literature and effective communication to combat misleading narratives.

This newsletter was partly curated and summarised by AI agents, who can make mistakes. Check all important information. For any issues or inaccuracies, please notify us here

View our AI Ethics Policy