MediaMorph Edition 93 - by HANA News

AI ghostwriters - dead and alive


The written-by-a-human bit

One of the first party tricks most people discovered in ChatGPT was asking it to rewrite a piece of text “in the style of X”. B-list journalists were bemused to find that their writing voice could be cloned, and were quick to tell everyone about it.

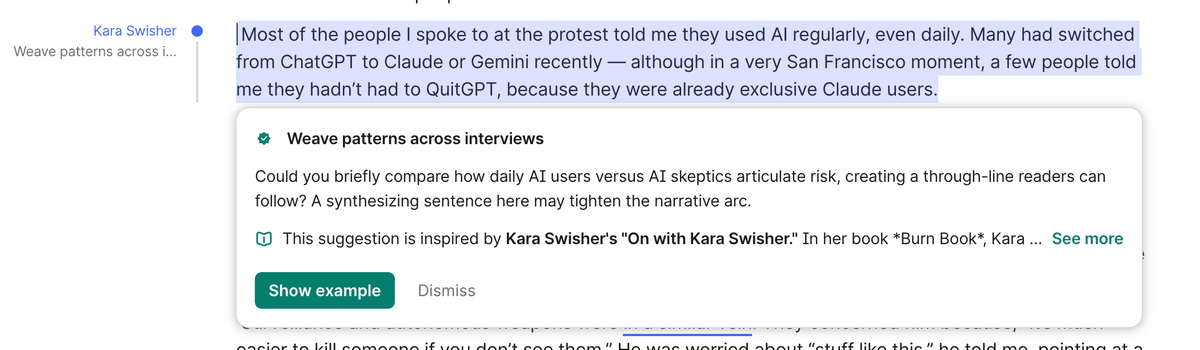

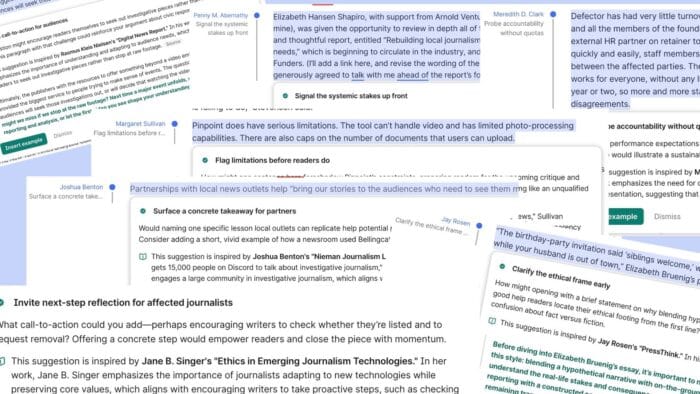

Fast forward three years, and A-list journalists are less impressed that they have been “recruited”, without permission, to a list of “expert advisers” by your friendly writing assistant, Grammarly.

Grammarly now offers a panel of expert ghostwriters to sit behind you and offer “expert advice” on how they would enhance your output. The roll call includes The Verge’s editor-in-chief, Nilay Patel, editor-at-large David Pierce, Casey Newton (see below) and Joanna Stern, former Verge writer Monica Chin, Wired’s Lauren Goode, Bloomberg’s Mark Gurman and Jason Schreier, The New York Times’ Kashmir Hill, plus Steven Pinker and Gary Marcus, among many others.

The technology is not all that new. What is new, however, is the blatant and shameless parading of talented authors’ and journalists’ names, front of house, embedded in a product, without so much as a please or thank you.

It is beyond parody that while the creative industry is fighting a rearguard action to safeguard copyright laws, mini-bots are popping up as AI impersonators of the actual authors. Expect massive pushback and retribution.

PS: Stephen King told me this was very well written

Happier days for the Daily Telegraph as they find a new berth at Axel Springer, who beat the Daily Mail Group with an eye-watering £575m counter-offer. While DMG is an AI foot-dragger, Axel Springer CEO Döpfner is no slouch when it comes to AI adoption:

“Translation, orthographic error correction, fact-checking in general, aggregation of information that is out there, editing of that to a certain degree … layout, photo selection, production … the whole technological workflows of newsroom production, it’s all going to shift to artificial intelligence — and machines will do it better.”

“What journalists should focus on more is the old core of journalism … and that is reporting, seeing something that nobody else sees, corresponding, being at a place where nobody else is,” he added. “And most importantly, investigative journalism — in-depth research to find out something that was not supposed to be found out.”

Old hands at The Telegraph are about to learn some new tricks.

Tool of the week: GPT 5.3 Codex - a step change in speed and performance for user-friendly coding and prototyping. Even I managed to launch a new directory, Mathison Marketplace, a website for the coming zero-human startups.

Mark Riley, CEO Mathison AI

AI and Journalism

This week’s best articles, as chosen by our editors

Grammarly turned me into an AI editor against my will and I hate it
Platformer - March 10, 2026
Grammarly's controversial new "expert review" feature, which falsely suggests insights from renowned professionals while relying on AI-generated content, has sparked outrage among writers like Kara Swisher for exploiting their names and expertise without consent. Meanwhile, Anthropic faces legal battles with the Pentagon over its AI technology's use in military operations, raising ethical concerns about AI's role in warfare and implications for the tech industry’s relationship with government contracts.

A lot of journalism folks are offering editing advice as Grammarly’s AI “experts”
Grammarly's new "Expert Review" feature controversially uses the names of real journalists and academics for AI-generated writing feedback without their consent, raising ethical concerns. In related discussions, industry figures examine the evolving landscape of journalism, addressing topics from the decline of national news to the impact of AI and sports betting on newsrooms.

How Local Newsrooms Are Balancing AI Efficiency with Journalistic Integrity
OCNJ Daily - March 9, 2026
Local journalism is increasingly integrating AI tools to enhance efficiency and expand coverage, while prioritizing editorial integrity and community engagement through human oversight. As newsrooms navigate this technological shift, they focus on transparency and ethical guidelines to maintain trust and authenticity in their reporting.

The information ecosystem is being redrawn by AI. That might be good news
Reuters Institute for the Study of Journalism - March 3, 2026
The landscape of journalism is undergoing transformative shifts as AI drives the move from scarcity to abundance and changes audience interactions, challenging traditional economic models. While these changes pose significant threats, they also present opportunities for innovation and deeper engagement, potentially expanding access to trustworthy information tailored to diverse user needs.

New York’s FAIR News Act Would Set the Already Struggling Journalism Industry Back
R Street Institute - March 5, 2026
The proposed FAIR News Act in New York could severely restrict journalism's use of AI by imposing compliance mandates that may divert resources from reporting, raise constitutional concerns, and hinder productivity in an industry already facing financial struggles. While the legislation aims to address valid issues surrounding AI, major news outlets are already implementing governance frameworks to ensure responsible integration and maintain reader trust.

AI is forcing journalism to rediscover what the profession actually does
South China Morning Post - March 3, 2026
The essence of journalism lies not in individual wordsmithing but in the rigorous verification of facts, much like how an architect ensures a building's safety. While AI can produce coherent text, it lacks the critical ability to discern truth, highlighting the indispensable role of human oversight in upholding journalistic integrity.

Hyperlocal AI with a million subscribers
CJR
Patch, led by CEO Warren St. John, is dedicated to delivering hyper-local news and fostering community engagement, addressing the information needs of individual neighborhoods. Through its daily newsletter, Patch connects residents with relevant local content, highlighting the importance of smaller communities in journalism today.

Growing more complex by the day: How should journalists govern use of AI in their products?
The news industry is undergoing a transformation with the rise of artificial intelligence, enhancing content creation and personalized delivery while raising concerns about job displacement and misinformation. As media organizations navigate this complex landscape, striking a balance between AI innovation and journalistic integrity will be crucial for the future of news.

What do the public think of AI-written articles – and should journalists have to disclose how they’ve used the technology?
YouGov
A recent YouGov survey reveals that while 26% of Britons believe they've read AI-written news articles, a staggering 72% are hesitant to engage with such content, highlighting a strong demand for transparency about AI's role in media. Notably, 91% of respondents advocate for disclosure when AI is involved in generating articles or opinion pieces, reflecting widespread skepticism and the desire for clarity in journalism.

Manager at Associated Press Tells Journalists That Resistance to AI Is Futile
Futurism - March 7, 2026
Aimee Rinehart of the Associated Press faced backlash for suggesting that resistance to AI in journalism is "futile," sparking concerns among reporters about the integrity of human writing. This controversy highlights the growing tension between newsrooms experimenting with AI and journalists' worries over its reliability and impact on traditional reporting.

Artificial Intelligence and local journalism
Left Hand Valley Courier - March 4, 2026
Local newspapers in Boulder County are grappling with the rise of AI in journalism, exemplified by the challenges faced by Scott Converse's Longmont News Network, which has drawn criticism for inaccuracies and a lack of depth. While some see potential in AI to assist reporters, editors emphasize the importance of human oversight to maintain accountability and community engagement in news reporting.

Szostak: AI is journalism’s kryptonite, not its tool
The Rocky Mountain Collegian - March 10, 2026
The rise of AI in journalism raises significant concerns about accuracy and integrity, as studies show many journalists rely on AI-generated content that often lacks truthfulness. To preserve trust in journalism and ensure factual reporting, it's essential to impose restrictions on AI usage, as exemplified by The Collegian's commitment to accurate news.

AI and Academic Publishing

This week’s best articles, as chosen by our editors

Journal Submissions Riddled With AI-Created Fake Citations
Inside Higher Ed - March 6, 2026
The rise of AI-generated phantom citations in academia, exemplified by non-existent papers and references being mistakenly cited by scholars, raises serious concerns about the integrity of scholarly work. As journals grapple with this issue, the pressure to publish may lead researchers to prioritize speed over quality, potentially undermining the value of academic contributions.

Publishers call for AI transparency and copyright protection
Research Information - March 5, 2026
The Publishers Association is urging the UK government to reject copyright exceptions for AI and enforce transparency for developers, as highlighted in their report on the thriving AI licensing market. With all major UK academic publishers expected to engage by 2026, the report underscores the country's competitive edge in high-quality content creation amid growing demand in AI development.

Publishing in the Era of Artificial Intelligence: What Do Medical Research Journals Expect?
Cureus - March 9, 2026

Scientists are failing to disclose their use of AI despite journal mandates, finds study
Physics World - March 5, 2026
A recent study of over 5.2 million academic papers reveals a significant rise in AI tool usage, particularly in physics, yet only 0.1% disclose this assistance, highlighting a troubling "transparency gap." Researchers advocate for a shift towards proactive engagement with AI, promoting ethical frameworks and institutional innovation to enhance scientific integrity and drive advancements in research.

Weekend reads: The LLMs ‘willing to commit academic fraud’; ‘peer replication’ instead of review; a ‘spam filter’ for predatory journals
Retraction Watch - March 7, 2026
This week at Retraction Watch, significant developments in academic publishing include the removal of a controversial vaccine study preprint, a medical journal's admission of fictional case reports, and discussions on the challenges of peer replication and research integrity. Notably, a podcast episode examines cheating in science, while publishers seek to combat paper mills and assess AI's environmental impact in research.

Publishers sue 'shadow' library allegedly powering AI chatbots
Reuters
The "Big Five" publishers (Hachette, Penguin Random House, HarperCollins, Simon & Schuster, and Macmillan) are joining forces to tackle key industry challenges like diversity, digital sales strategies, and fair author compensation, aiming to adapt to the evolving publishing landscape and ensure a sustainable future. Through collaboration, they hope to strengthen their market positions and elevate industry standards.

Ministers urged not to allow data mining of academic literature
Research Professional News - March 4, 2026
The Publishers Association in the UK is urging the government to reject a controversial copyright exception for AI companies that would allow them to mine scholarly literature without licenses, stressing that such a move could undermine licensing markets and favor US tech giants. Advocating for stricter transparency rules for AI developers, they aim to protect intellectual property rights while fostering innovation and trust in AI systems.

This newsletter was partly curated and summarised by AI agents, who can make mistakes. Check all important information. For any issues or inaccuracies, please notify us here

View our AI Ethics Policy