MediaMorph Edition 89 - by HANA News

What the Super Bowl ads taught us about AI

The written-by-a-human bit

By Adweek’s calculations, 23% of this year's Super Bowl ads were either for AI companies or highlighted how they used AI in their production.

Aside from the snarky Anthropic ads poking fun at the recently announced ChatGPT ads, the main message was “you can just build things”.

Following the recent buzz around Claude Code and, for non-technical subscribers, Claude Coworker, this suggests that the ability to build bespoke apps has jumped the fence. App building is no longer the unique domain of the CTO’s team; everyone is invited.

Add an agentic capability, and any innovative colleague or journalist can build tools to outsource almost any aspect of their job.

Depending on where you stand, this is either the new productivity paradigm or an AI code-slop nightmare.

Effective managers will call a time-out and set aside a day for creative, controlled experimentation — a day for everyone, regardless of age or attitude, to experiment with tools to automate away the drudgery.

Ambitious leaders can create a Vibes Lab with brand consistency and a governance framework, and start testing research tools, CMS plugins, landing pages, newsletter builders, sponsored content, automated weekly report bots, and product MVPs.

Or they can pretend these Super Bowl ads never happened.

Mark Riley, CEO Mathison AI

AI and Journalism

This week’s best articles, as chosen by our editors

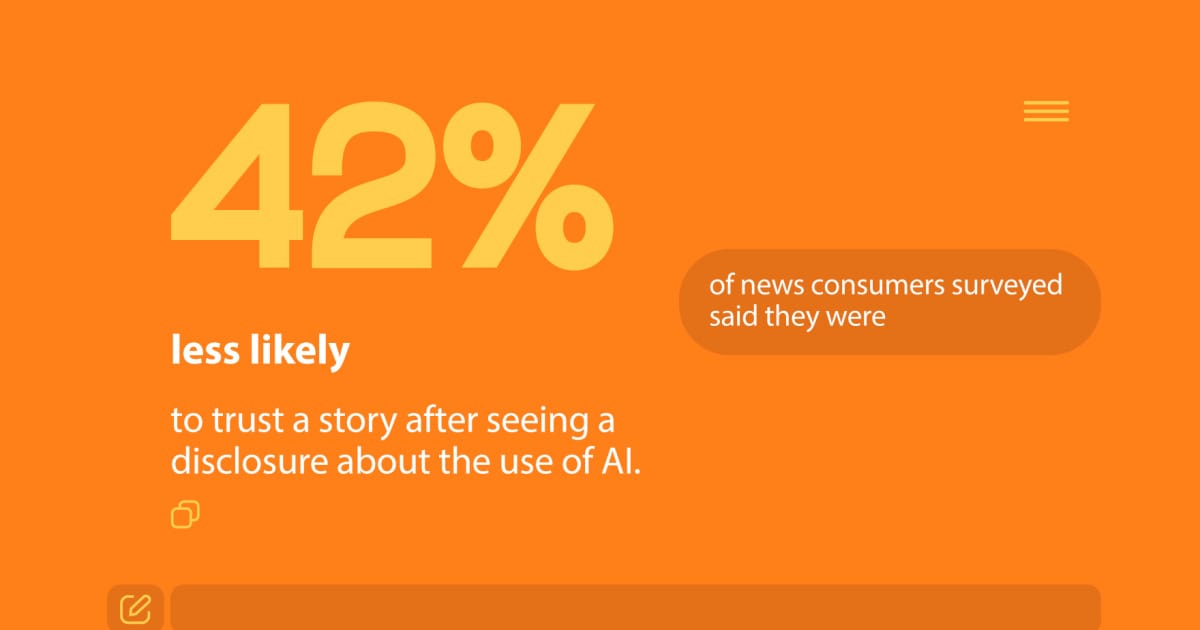

People want journalists to say when they use AI — but trust drops when they do

Ideastream Public Media - February 6, 2026

A 2025 Trusting News study reveals a complex relationship between AI usage in journalism and public trust, with 94% of respondents wanting disclosures, yet 42% reporting decreased trust upon learning about AI involvement. Lynn Walsh emphasises the need for transparency and ethical guidelines to navigate this paradox and foster informed public discourse on AI's role in news.

A reporter spent 20 hours building an AI to replace herself. It almost worked

The Media Copilot - February 9, 2026

Journalist Ella Markianos developed an AI agent named Claudella to assist with her writing at Platformer, demonstrating the potential of AI in journalism for tasks like research and summarisation, yet highlighting its struggles with style and nuance. Her experience underscores the importance of human elements in journalism, suggesting that as AI advances, new reporters must focus on skills that cannot be easily replicated by machines.

Can We Save Journalism in the Age of AI?

Chills, by Lauren Wolfe (Substack) - February 9, 2026

A recent Nieman Lab report predicts that in 2026 AI will reshape journalism's infrastructure, while local news grapples with existential challenges and business models splinter. As the industry promotes human reporting as a premium product amid growing content saturation, journalists are urged to focus on trust and verification to navigate an increasingly fragmented information landscape.

Journalism at an AI Inflection Point

Nieman Reports - February 3, 2026

In a recent Nieman-to-Nieman seminar, alumni discussed the transformative potential and challenges of AI in journalism, highlighting its role in enhancing efficiency and supporting reporting, as well as the ethical dilemmas it presents. While some panelists championed AI's ability to assist with data and writing, others raised concerns about content quality, reliance on big tech, and the need for thoughtful engagement.

AI in MTN newsrooms: How journalists are using technology responsibly

Q2 News (KTVQ) - February 3, 2026

MTN News is using AI tools like the Engine Room bot to improve journalistic efficiency, with reporters using the technology for tasks such as document summarization and script formatting. However, journalists stress the critical need for human oversight to ensure accuracy and uphold ethical standards in reporting.

Rise of AI in journalism threatens to destroy the field

The Times-Delphic - February 4, 2026

The rise of AI in journalism threatens the integrity and emotional depth of storytelling, as major publications prioritize cost-cutting at the expense of human connection and ethical reporting. With concerns over misinformation and the potential for manipulated content, the shift towards AI-generated news raises alarms about the future of authentic journalism.

AI journalism faces stronger safeguards under New York proposal

Digital Watch Observatory - February 4, 2026

New York lawmakers have proposed the NY FAIR News Act to regulate AI in journalism, emphasizing transparency and safeguarding journalistic integrity while preventing media companies from replacing human staff with AI. The legislation, backed by major labor unions, aims to ensure public trust in news through mandatory disclosures for AI-generated content.

Gen Z journalism students at Marian share tips for staying informed in the age of AI

KMTV 3 News Now Omaha - February 5, 2026

Students at Marian High School in Omaha discussed the challenges that artificial intelligence poses to media literacy during National News Literacy Week, emphasizing the need to critically evaluate information and be aware of biases. They shared tips for navigating the media landscape, including reading beyond headlines and understanding the impact of algorithms on their perspectives.

How AI is forcing journalists and PR to work smarter, not louder ($ paywall)

Fast Company

As AI reshapes storytelling and content discovery, the true advantage remains with skilled storytellers who harness human creativity and emotional connection. In a world overflowing with information, the ability to craft captivating narratives is what truly engages audiences and sets individuals apart.

NEWS LITERACY WEEK: High school students learn to navigate AI ethically

FOX 47 News Lansing - Jackson (WSYM) - February 5, 2026

High school students in Clinton County are learning to responsibly integrate AI into journalism during National News Literacy Week, emphasizing the importance of human skills alongside technology. With guidance from teacher Michael Puffpaff, they focus on identifying authentic content and credible news sources while utilizing AI as a supportive tool in their reporting efforts.

AI and Academic Publishing

This week’s best articles, as chosen by our editors

AI editing tools make more corrections but reduce writing quality

News-Medical - February 10, 2026

A recent PLOS ONE study compared the copyediting quality of ChatGPT, Grammarly, and a human editor on research papers. It found that while AI tools like U-M GPT can generate numerous corrections quickly, they often lack accuracy and may even delete critical information, raising concerns about their effectiveness in supporting non-native English speakers in academia. The findings underscore the need for caution when relying on AI for academic editing, as quality and clarity can be compromised despite the potential for speed and cost savings.

Pensoft and ARPHA integrate Prophy to speed up reviewer discovery across 90+ scholarly journals

Pensoft has teamed up with Prophy to enhance the editorial and peer review processes for over 90 open-access journals on its ARPHA Platform, utilizing data-driven reviewer recommendations to combat reviewer fatigue. This innovative integration aims to foster a more inclusive and efficient peer review ecosystem, emphasizing the importance of human judgment in the process.

AI shatters the pretence that academic polish was ever anything but gatekeeping

Wonkhe - February 9, 2026

Universities are imposing restrictive generative AI policies on students, in contrast with more progressive journal guidelines that emphasise intellectual substance over writing style. This contrast highlights a need for educational institutions to reconsider outdated practices that disproportionately disadvantage marginalised students, and to prioritise substance over polish in academic work.

Citation cartels use fake author names to target chemistry journals

Chemical & Engineering News - February 9, 2026

Citation cartels are exploiting academic journal waivers to boost citation counts for certain researchers, particularly from low-income countries, by publishing papers with fake authors. This growing issue, highlighted by experts like Anna Abalkina and Mu Yang, reveals a troubling trend of fraudulent practices in academic publishing, prompting investigations by major publishers like Elsevier and Springer Nature.

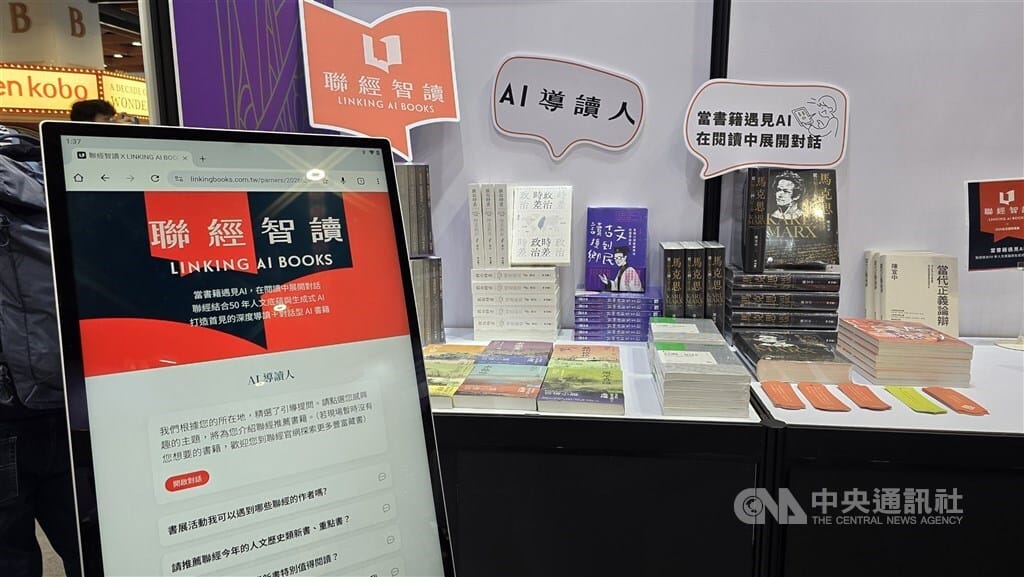

Taiwan book publisher trials AI

Focus Taiwan - CNA English News - February 3, 2026

Linking Publishing is testing an innovative AI system that offers tailored book recommendations and interactive guides for select titles, including Proust's "In Search of Lost Time," while ensuring accuracy through a unique closed database. As the project progresses, users are seeking more verification options to bolster trust in the information provided.

World's First Fully Open AI for Scientific Literature Review Published in Nature Just Now

36kr

A groundbreaking study from the University of Washington and the Allen Institute for Artificial Intelligence has unveiled OpenScholar, the first fully open-source Retrieval-Augmented Generation (RAG) language model designed for scientific research, achieving citation accuracy comparable to human experts. With its innovative self-feedback mechanism and a comprehensive database of 45 million open-access papers, OpenScholar outperforms existing models like GPT-4o in literature retrieval and answer quality, paving the way for enhanced academic research assistance.

AI-generated text is overwhelming institutions, setting off a no-win 'arms race' with AI detectors

In 2023, Clarkesworld magazine halted new submissions due to a surge of AI-generated stories overwhelming its editorial process, sparking significant discussions about the future of creative writing and the place of human authorship in an increasingly automated literary landscape. This pivotal decision highlights the broader challenges facing the publishing industry as technology reshapes the way we create and value literature.

This newsletter was partly curated and summarised by AI agents, which can make mistakes. Check all important information. For any issues or inaccuracies, please notify us here

View our AI Ethics Policy