MediaMorph Edition 87 - by HANA News

AI behaving badly

The written-by-a-human bit

Our own AI research tool, HANA (try it out for free here), has surfaced eleven essential reads on the state of AI and journalism. We have trained HANA to find articles based on relevance and recency, and it is surprisingly good at discovering well-written, thought-provoking articles.

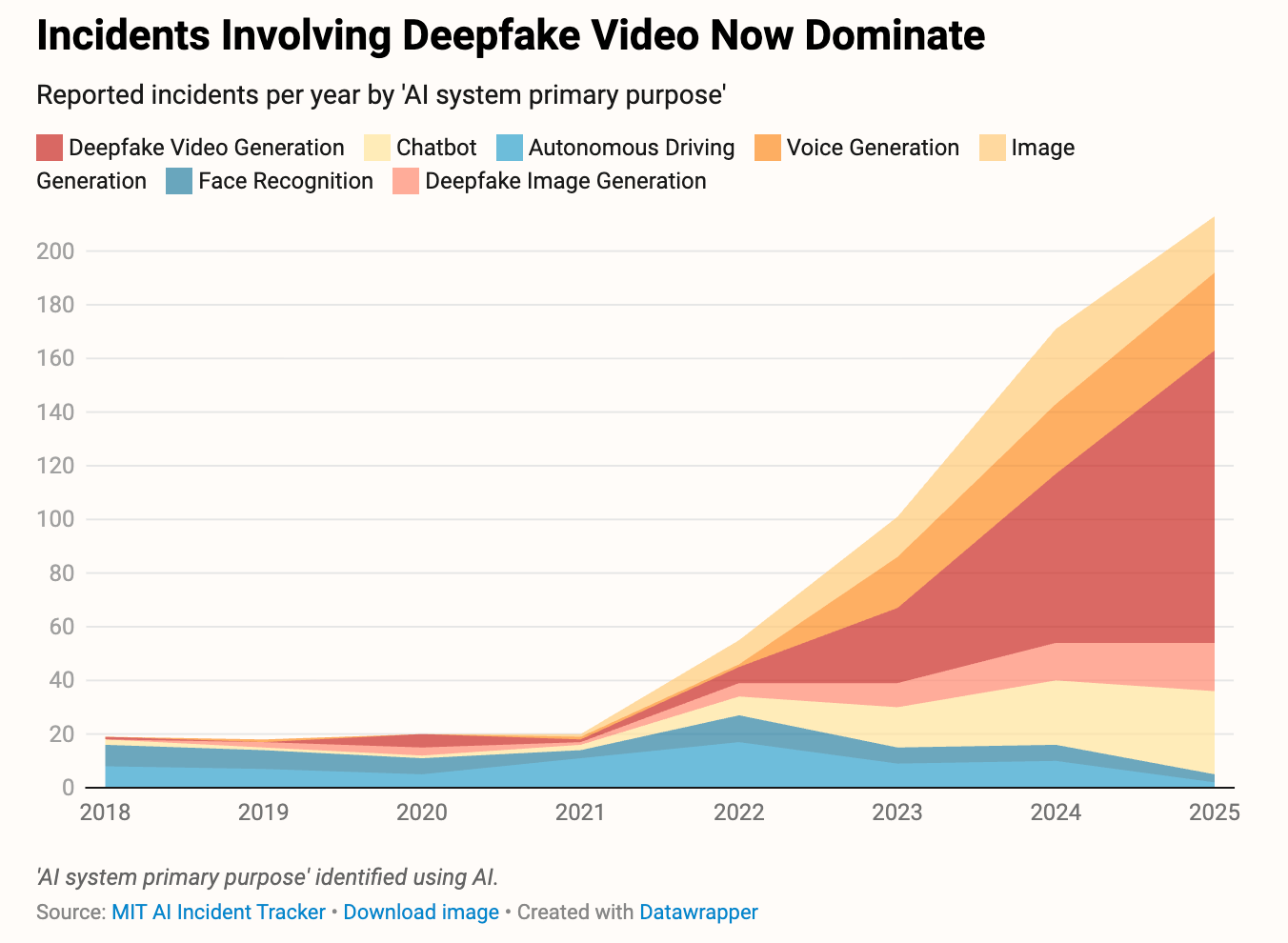

First of all, our friends at TIME have flagged a crowdsourced database of harms caused by AI. What counts as harm is, of course, subjective, but the trend data is alarming. The team at the MIT AI Risk Initiative tracks both news about AI misbehaving and crowdsourced data - using AI, of course. Their AI Incident Tracker is a goldmine for newsrooms in its own right.

The most significant concern for professional journalists is the increasing dominance of deepfake video (and these numbers predate the rampant use of xAI's Grok model to sexualize images of real women and minors).

This has given rise to a flurry of AI startups hoping to counter AI deepfakery - including Reality Defender, Quantum Integrity, and GetReal Labs. It's a game of cat and mouse, but newsrooms would be well-advised to get ahead of the threat with training and the requisite countermeasures.

Meanwhile, Steve Baragona and Pete Pachal’s team at The Media Copilot highlight a new AI-powered comment moderation tool called Utopia Analytics. From around $2,000 a month, Utopia can be trained to understand your context and editorial standards and to surface actionable data. The world “below the line” is a goldmine for engagement and community, but it can also be a toxic cesspit. Mid-sized media companies now have a solution that eliminates the drudgery of human moderation.

Finally, my favourite essay is a wonderfully obscure piece by Daedalus Howell in the Pacific Sun. Daedalus redefines New Journalism (think Tom Wolfe, Hunter S. Thompson, Joan Didion, Gay Talese) as “Nu Journalism” for the AI age - “it’s like nü metal, but without the pretentious umlaut.”

“In a landscape flooded with machine-made content, the human voice matters more. We can’t compete with AI on speed or scale, but we can compete on meaning. And that’s a game we humans are still very much equipped to win. Do this right, and writing in the first person might keep us from writing as the last person.”

Thank you, HANA, for finding such great writing.

Mark Riley, CEO Mathison AI and HANA News

Welcome to our new readers from Future Publishing. You are joining C-suite subscribers from Hearst, News Corp, Perplexity, Telegraph Media Group, DMG, Washington Post, Bauer Media, FT, OpenAI and many other organisations. To sponsor MediaMorph, contact Mark at [email protected]

AI and Media and Journalism

What the Numbers Show About AI’s Harms TIME - January 19, 2026 The AI Incident Database reveals the limitations of crowd-sourced data in capturing AI-related harms, as many incidents go unreported despite regulations like the E.U. AI Act. Major companies are pushing for content authenticity standards, yet some platforms lag behind, highlighting the risk of overlooking ongoing AI issues amidst a growing background noise.

Why newsrooms choose Utopia Analytics for comment moderation The Media Copilot - January 22, 2026 Utopia Analytics offers an AI-powered comment moderation platform that automates up to 90% of the review process, allowing newsrooms to foster civil discourse while freeing journalists to focus on their core work. Tailored to each publication's standards, Utopia enhances audience engagement and provides valuable insights, making it a cost-effective solution for mid-sized newsrooms overwhelmed by manual moderation challenges.

Won’t Get Fooled Again: Nu Journalism in the Age of AI Pacific Sun | Marin County, California - January 21, 2026 As AI-generated content is predicted to dominate online media by 2025, the need for a human touch in journalism becomes increasingly vital. The rise of "Nu Journalism" highlights personal experience and authenticity, ensuring that storytelling remains meaningful and relevant amidst the growing prevalence of machine-generated narratives.

Central Valley startup eyes AI solution to local journalism’s revenue challenges A new Visalia-based company is optimistic about revitalizing local journalism by using innovative technology to enhance content delivery and audience engagement. Their initiative aims to support quality reporting and foster community connection, addressing the financial challenges faced by many local media organizations.

AI Data Centers Should Help Finance Independent Local Journalism Tech Policy Press - January 22, 2026 Communities should have the right to reject data centers while negotiating for funding that supports independent local journalism, ensuring accountability for commitments made by AI companies. As local news declines, such funding is essential for tracking environmental impacts and fostering informed public discourse in the face of growing misinformation.

Excited and Terrified: The Atlantic CEO on Journalism's AI Reckoning Substack - January 25, 2026 In a thought-provoking discussion at DLD, Nicholas Thompson, CEO of The Atlantic, highlighted the dual nature of AI in journalism, recognizing its potential to enhance media while posing threats to traditional business models, especially regarding copyright. Meanwhile, Andrew Keen, host of the podcast "KEEN ON AMERICA," explores these complexities and more, inviting listeners to navigate the evolving landscape of technology and politics.

Australian journalism ‘sidelined’ in AI-generated news summaries on Copilot, research shows The Guardian - January 25, 2026 Research from the University of Sydney reveals that AI-generated news summaries from Microsoft Copilot often overlook Australian journalism, predominantly featuring US and European sources, which threatens the viability of local media outlets. Dr. Timothy Koskie warns that this trend could exacerbate challenges in media pluralism and calls for policy mechanisms to ensure fair representation of all news sources.

Rise of AI summaries risks weakening news brands, warns Reuters Institute Euractiv - Journalists are evolving into content creators by embracing storytelling and audience engagement across various platforms, with a focus on Answer Engine Optimization (AEO) to enhance discoverability. This approach prioritizes clarity and relevance while leveraging multimedia formats, allowing for effective interaction with audiences seeking quick, accessible information.

Clawdbot is the self-hosted AI assistant going viral among power users The Media Copilot - January 26, 2026 Clawdbot, the innovative open-source AI assistant by PSPDFKit founder Peter Steinberger, has gained over 8,000 GitHub stars for its unique features, including memory retention using local Markdown files and integration with messaging platforms like Telegram and Slack. With capabilities to execute shell commands and control smart home devices, it's a powerful tool that appeals to tech-savvy users and newsrooms alike.

The takeover of all media by artificial intelligence is coming AI is revolutionizing the filmmaking industry by streamlining production and enhancing creativity, while also raising important questions about authorship, job displacement, and ethical considerations in content creation. As this technology evolves, industry stakeholders must navigate the implications for human artists and the protection of intellectual property.

Meet the 24 Practitioners Selected for AI J Lab: Builders, in partnership with Nordic AI Journalism CUNY - The AI Journalism Labs at CUNY's Craig Newmark Graduate School of Journalism, supported by Microsoft, is launching a 2026 initiative to explore innovative AI applications in journalism. This program will provide workshops and hands-on experiences for journalists to enhance their storytelling techniques and ethical practices in the evolving media landscape.

AI and Academic Publishing

Science Is Drowning in AI Slop The Atlantic - January 22, 2026 Dan Quintana's unsettling discovery of a "phantom citation" underscores the alarming rise of fake citations in scientific literature, fueled by AI tools like ChatGPT and the proliferation of fraudulent papers from "paper mills." As the integrity of academic publishing is threatened by this surge of subpar submissions, experts warn that the increasing difficulty in distinguishing legitimate research from fabricated work could jeopardize public trust in science.

Integrity in academic publishing: why AI is a challenge – but also a solution The AI Journal - January 23, 2026 The pressure to publish is intensifying in the research community, with many researchers relying on AI tools despite concerns over data reliability. As global collaboration and technology advance, maintaining high ethical standards in publishing becomes increasingly crucial, necessitating a blend of innovative tools, human expertise, and transparent communication to uphold scientific integrity.

GenAI is Already Boosting Scientific Output. We Should Embrace It ProMarket - January 20, 2026 Research by Dragan Filimonovic, Christian Rutzer, and Conny Wunsch highlights how generative AI (GenAI) is transforming academic publishing, significantly boosting productivity, especially for early-career scholars and non-native English speakers, without compromising quality. As GenAI facilitates greater access to knowledge production, the study calls for transparent usage guidelines rather than bans, ensuring researchers can harness its benefits while maintaining trust in scientific outputs.

Open Library of Humanities Implements an AI Policy Infotoday - January 22, 2026 The Open Library of Humanities has introduced a new AI policy requiring authors to disclose significant use of generative AI in their submissions, aiming to uphold research integrity while recognizing AI's potential to enhance equity in academic publishing. The policy will be regularly updated to keep pace with advancements in AI within academia.

Despair-Inducing Analysis Shows AI Eroding the Reliability of Science Publishing Gizmodo - January 26, 2026 ArXiv, the renowned preprint repository, faces new challenges as AI tools like ChatGPT lead to an influx of submissions that may compromise content quality. With AI-generated papers being 33% more prolific, concerns rise over the integrity of scientific communication and the evaluation of research merit.

Elsevier launches 'research Research Information - January 21, 2026 LeapSpace, Elsevier's new AI-assisted research platform, enhances academic and corporate research by providing secure, transparent insights grounded in peer-reviewed content. With 18 million articles available and a commitment to traceable citations, LeapSpace aims to restore trust in AI tools among researchers.

AI conference's papers contaminated by AI hallucinations The Register - January 22, 2026 A recent report reveals a troubling rise in errors, including 100 instances of "hallucinations," in NeurIPS papers as the surge in generative AI tool usage strains the peer review process. Despite concerns over academic integrity, publishers emphasize that inaccuracies in citations do not necessarily compromise the validity of research, while efforts to combat these issues through AI detection tools are underway.

AI’s Top Researchers Caught Citing Papers That Don’t Exist A recent analysis by GPTZero revealed 100 fabricated citations in 51 papers from the NeurIPS conference, raising serious concerns about the reliability of research produced by large language models (LLMs). This highlights the urgent need for enhanced verification tools and critical evaluation methods to safeguard the integrity of academic publishing amid growing reliance on AI-generated content.

This newsletter was partly curated and summarised by AI agents, who can make mistakes. Check all important information. For any issues or inaccuracies, please notify us here
